A Year of Having No Work Access on My Personal Phone

For many of us, the end of 2021 and the start of 2022 has been a time of reflection and comparison. It doesn’t help that social media sends us flashback reminders of prior years. Through the pandemic some of these have prompted feelings of hope, such as photos of holidays and family events. Reminders of 2020, however, have shown times of great strife and regret that we would probably rather forget.

I have similar experiences when flipping through prior blogs. They can help generate new thoughts and insights for me, which I always appreciate with a wry smile. A recent unpublished blog has got me thinking back to January 2021, when I made the decision to remove all work applications from my phone. It was an easy decision to make at a time when I was burned out and our family were struggling with isolation after spending our first Christmas and Hogmanay, or New Year’s for those non-Scots reading, locked down in London away from friends and family in Scotland. I remember coming back from a two-week vacation with an uncomfortable static buzzing continually prickling through my brain, and black raccoon-like bags weighing down my eyes.

Phones are not just for chatting anymore!

It is now one year later. As I come back from a truly relaxing break with my family, embracing the joy and giggles of a happy toddler eagerly playing with his grandparents and cousins, I am glad I didn’t finish and hit send on that piece. Time and distance have helped me consider the decision in a more pragmatic way, and truly see the benefits it has given me in separating my work and home life. I did make other changes. Moving role, adding a night off on Thursdays to write or lounge in the bath, and ensuring I prioritise work items that I enjoy over the slog of the next item on the to-do list were great aids to my mental health and wellbeing. But the most impactful one was definitely wiping all work apps, including email, from my phone.

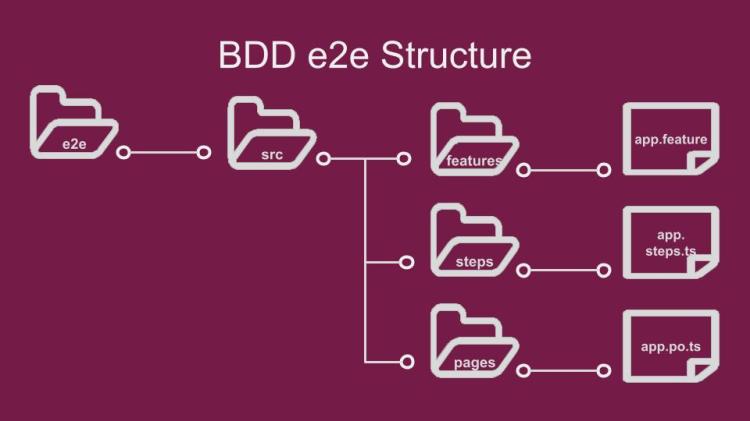

In the midst of my return to work, I also had a conversation with a mentee who was considering installing work apps, including email, on their phone. Over the years I’ve had many of these conversations with recent graduates, and each time they make me think about how keen we are to plug into the matrix of work before considering the consequences. Here I share the drivers that caused me to reject Bring Your Own Device, or BYOD, as a productivity driver, along with tips and tricks to help you decide whether unplugging from the work matrix will work for you.

Why Do Fools Fall in Love?

The tale of how I came to be plugged in is probably an experience shared by many engineers in their initial rise through the ranks. I received my first dedicated work handset a few months after starting, following a late night in the office monitoring report submissions. At the time it made sense, as it allowed me to monitor from home without needing to log in from the office, or even connect remotely from home.

When the company adopted BYOD a few years later, it seemed like a natural thing to adopt immediately since I was used to having some connection to work. Despite a discussion with a good friend about their decision not to adopt BYOD when returning their device, I decided to keep my access. By this point I had formed the bad habit of checking emails on my commute and at home in the evening, much like many others as discussed in this piece from 2015. I told myself it helped me see what I would face the next day and form a plan of what I needed to tackle when I made it to the office.

In hindsight keeping an eye on work emails through maternity leave was probably not very healthy!

I even continued this pattern while on parental leave. I told myself at the time that it was a useful means of keeping connected to the project to which I would be returning. I listed it as a valid learning mechanism for keeping up to date. It also formed my opinions about email as a bad medium for software alerts, which I reflected on at the time, as I found test environment alerts flooded my inbox and made it difficult to find useful content. In hindsight, I now consider it more a symptom of FOMO and a fear of being forgotten by my team. The time feels especially wasted since I was moved to a different project on my return to work.

When the pandemic hit, I added our chat app to my phone to ensure I was contactable at times when I was looking after my son. I’m sure any parents reading will remember the challenge of that time. I found this constant connection contributed to a rather nasty burnout in December 2020. I have vivid memories of one lunchtime when my phone was buzzing off the table with messages from my team trying to get an urgent approval actioned while I tried to feed a weaning baby. I also found that, as we adapted our work hours around personal circumstances, many of us defaulted to using chat apps for everything, leading to notifications late at night for non-urgent queries.

Maintaining a work connection on my phone meant I was always on when working from home

It was clear to me I was no longer reaping that perceived benefit of preparing for the next day. Instead, it made it difficult to sleep as I thought of the next problem I would deal with in the morning. At that time I had been advising colleagues, graduates, mentees and team members to think about whether they should have this connection to work. Yet I never took my own advice. It was this realisation that led me to pull the plug and uninstall all work apps from my phone.

Who’s Zoomin’ Who?

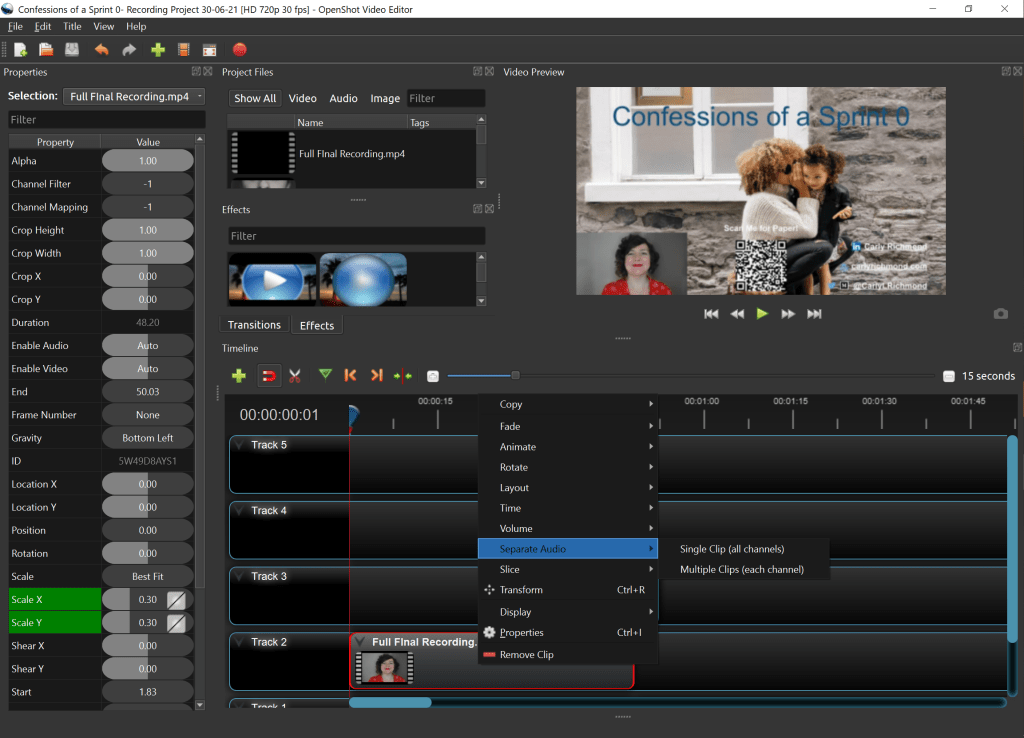

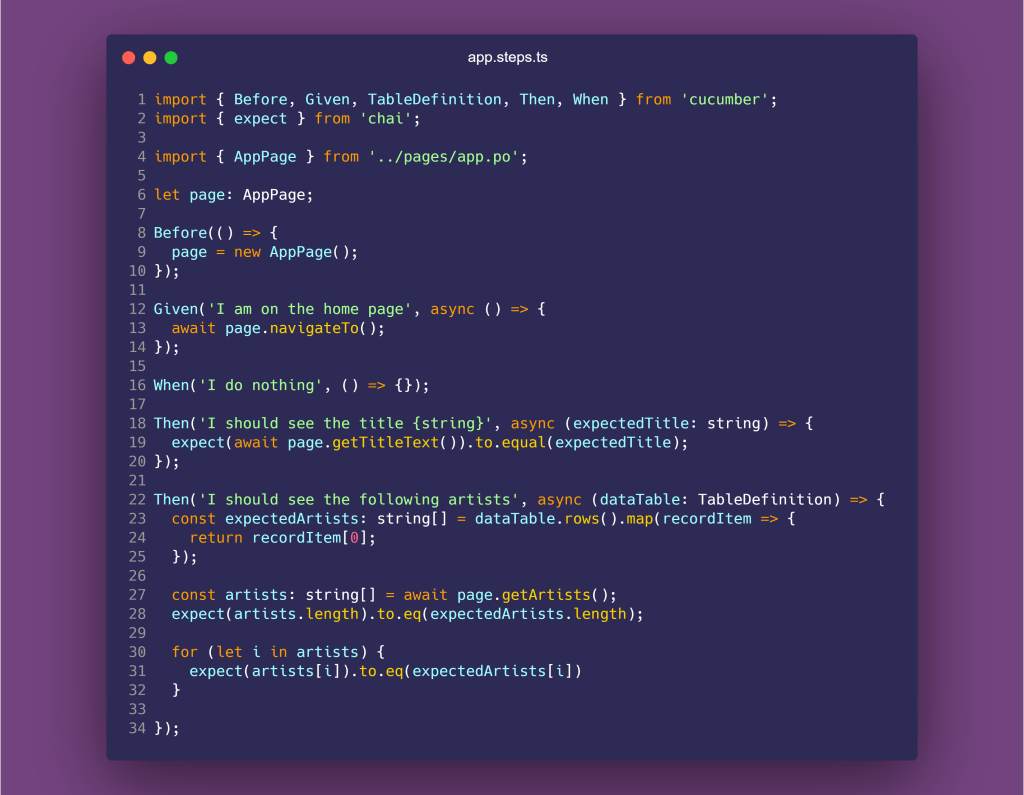

My story may resonate with you at this point. It may not quite have you convinced. If you fall into the latter camp, you may now be rhyming off the reasons you need to maintain your work connection after you log off. I’ve been there! I found it helpful to note down when and what I did on my phone over a couple of days, and to compare that against my motivations for having the access. I ended up with something a little like this:

I regularly told myself that I needed to be connected to help plan my working day on my commute, but also to ensure I could support my clients and cover support emergencies as they arose. I now see from the above listing that it became a problem once it had evolved into searching for issues, or into an attempt to multi-task when I really should have been focusing on picking up the food Mini R was enjoying throwing around the floor. Part of the problem in that latter scenario is that, since you don’t really have the context of the message, you don’t know whether it’s ignorable or not. Meanwhile, now that I am only contactable via a phone call, I’ll only be contacted for true DEFCON 1 instances, leaving me time to focus on lunchtime.

The other opinion you may have, which many new developers hold and which I once held myself, is that you don’t have anything else to monopolize your time. Therefore, occasionally checking work while chilling with Netflix isn’t the biggest of deals, and not a major imposition on your time. While I accept that I no longer fit this position, and that my time is more important now that I have a family, I do have some regret about the things I could have used that time for instead of working.

Among other things, I wish I had taken more bubble baths instead of being on my phone

Perhaps I could have written more. Or taken part in more spare time coding. Or taken many more relaxing baths, which I now wish I had the time to do. Or had more uninterrupted conversations with my partner instead of us both sitting glued to our phones while watching TV. Or even gotten through an extra book or two if I did want to ignore Mr R watching the football. We should be more respectful of our time outside of work, even if it works out at only around 47 minutes a day.

Constructing the Connection

I know I won’t have convinced all of you. I may be lucky if those determined to maintain access to work on their personal devices have even read to this point. New features are available on our phones to help us manage application time more effectively, which either didn’t exist or I didn’t know about when I was a member of the BYOD club.

These days you will not find any reference to work on my phone, and I’ve never been happier

My viewpoint will definitely not resonate with everyone, so if you are in the pro-BYOD camp, I do have some tips to help you avoid some of the challenges I faced before I pulled the plug on BYOD:

- Think about your role and the potential reasons people may wish to contact you. This can help you triage messages and decide which are DEFCON 1 panic stations compared to the sleepy normality of DEFCON 5.

- Avoid blindly downloading all available applications or setting up all media on your phone. Take a moment to think about which applications you truly need to access based on the reasons you have previously identified.

- Block out your evenings on your calendar to protect them from calls. This is particularly important for those working for global organisations outside the US.

- Set a limit on the amount of time you spend on these apps outside of work, both in the evenings and at weekends. Try setting usage limits or focus time settings for those applications to enforce your limits. Some apps allow you to do this within the software itself, but Android and iPhone also have various wellbeing options that you can use to limit applications and set Do Not Disturb for overnight.

- When using these applications outside of this time, be mindful of your own usage of chat versus email for any messages that you send to avoid distracting others. Try to respect the decision of others.

- Preferably, try to have access-free days at the weekends to get a true break from work.

- Consider having your phone number available in the company directory or shared with relevant colleagues for true emergencies. This is one that I have kept as a compromise.

Irrespective of your choice, no way is right or wrong. I know that having that separation is definitely the right decision for me. I wish I hadn’t rushed into connecting to the matrix so quickly. Together we need to respect the decisions of those who are unplugged as well as those who are plugged into work all the time. What is more important is that we take care of the wellbeing of colleagues in both groups and stay on the lookout for burnout in ourselves and others.

Thanks for reading! Best of luck finding the work connectivity that works for you. Do reach out if you would like to share your experience or want to discuss which option may be right for you.